Pickman: Decomposing Mega-Merges

Introduction

Pickman is a tool for cherry-picking patches from upstream U-Boot into a downstream branch. It walks the first-parent chain of the source branch, finds merge commits, and cherry-picks their contents one merge at a time using an AI agent.

This works well for normal merges containing a handful of commits. But upstream U-Boot occasionally has mega-merges: large merges whose second parent itself contains sub-merges. A single "Merge branch 'next'" can pull in dozens of sub-merges, each with its own set of commits, totalling hundreds of patches.

Feeding all of these to the AI agent at once overwhelms it. The context window fills up, conflicts become harder to diagnose, and a single failure can require restarting a very large batch. A better approach is to break the mega-merge into smaller pieces and process them one at a time.

The problem

Consider a typical mega-merge on the first-parent chain:

    A---B---M---C---D   (first-parent / mainline)
            |
            S1--S2--S3  (second parent, containing sub-merges)

When pickman encounters merge M, it collects all commits in prev..M and hands them to the agent. For a normal merge this is fine, since the range holds a few commits at most. But when S1, S2 and S3 are themselves merges, each containing many commits, the batch can be very large.
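To make the size problem concrete, here is a minimal sketch of what a range like prev..M contains, using a small in-memory commit graph instead of a real repository. The graph, the helper names `reachable` and `commits_in_range`, and the commit labels are all made up for illustration; pickman itself works on a git tree.

```python
def reachable(parents, tip):
    """Return the set of all commits reachable from tip (including tip)."""
    seen = set()
    stack = [tip]
    while stack:
        cur = stack.pop()
        if cur not in seen:
            seen.add(cur)
            stack.extend(parents.get(cur, []))
    return seen


def commits_in_range(parents, prev, tip):
    """Commits in prev..tip: reachable from tip but not from prev."""
    return reachable(parents, tip) - reachable(parents, prev)


# Hypothetical history: each commit maps to its parents, first parent first.
# S1 and S2 are sub-merges on the second-parent side of mega-merge M.
GRAPH = {
    'A': [],
    'B': ['A'],
    'x1': ['A'], 'x2': ['x1'],
    'S1': ['A', 'x2'],
    'y1': ['S1'],
    'S2': ['S1', 'y1'],
    'r1': ['S2'],
    'M': ['B', 'r1'],
}

batch = commits_in_range(GRAPH, 'B', 'M')
print(len(batch), sorted(batch))
```

Even in this toy history, a single merge on the mainline drags in every commit from both sub-merges; with real sub-merges the same range can run to hundreds of commits.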

The solution

Two new functions handle this:

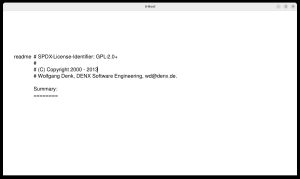

- detect_sub_merges(merge_hash) – examines the second parent's first-parent chain to find merge commits (sub-merges) within a larger merge. Returns a list of sub-merge hashes in chronological order, or an empty list if no sub-merges are present.

- decompose_mega_merge(dbs, prev_commit, merge_hash, sub_merges) – returns the next unprocessed batch from a mega-merge.
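The detection step can be sketched roughly as follows. This is not pickman's code: it runs on a hypothetical in-memory parent map rather than git, and the names `detect_sub_merges_sketch`, `reachable` and `GRAPH` are invented for the example. It assumes the walk stops once it reaches a commit already reachable from the merge's first parent.

```python
def reachable(parents, tip):
    """Return the set of all commits reachable from tip (including tip)."""
    seen = set()
    stack = [tip]
    while stack:
        cur = stack.pop()
        if cur not in seen:
            seen.add(cur)
            stack.extend(parents.get(cur, []))
    return seen


def detect_sub_merges_sketch(parents, merge_hash):
    """Find merges on the second parent's first-parent chain, oldest first."""
    pars = parents.get(merge_hash, [])
    if len(pars) < 2:
        return []          # not a merge, so nothing to decompose
    mainline = reachable(parents, pars[0])
    found = []
    cur = pars[1]
    # Follow first parents only, stopping where the side branch rejoins
    # history that the mainline already contains.
    while cur is not None and cur not in mainline:
        cur_parents = parents.get(cur, [])
        if len(cur_parents) > 1:
            found.append(cur)
        cur = cur_parents[0] if cur_parents else None
    found.reverse()        # walked newest to oldest; return chronological
    return found


# Hypothetical history: each commit maps to its parents, first parent first.
GRAPH = {
    'A': [],
    'B': ['A'],
    'x1': ['A'], 'x2': ['x1'],
    'S1': ['A', 'x2'],
    'y1': ['S1'],
    'S2': ['S1', 'y1'],
    'r1': ['S2'],
    'M': ['B', 'r1'],
}

print(detect_sub_merges_sketch(GRAPH, 'M'))
```

An empty result is what distinguishes a normal merge from a mega-merge here, so a caller can fall back to the ordinary single-batch path in that case.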

The second function handles three phases:

- Mainline commits — commits between the previous position and the merge’s first parent (prev..M^1). These are on the mainline and are not part of any sub-merge.

- Sub-merge batches — one sub-merge at a time, skipping any whose commits are already tracked in the database. Each call returns the commits for the next unprocessed sub-merge.

- Remainder commits — any commits after the last sub-merge on the second parent’s chain.

The function tracks progress via the database: commits that have been applied (or are pending in an open MR) are skipped automatically. On the next invocation, pickman picks up where it left off.
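The three phases and the database check can be sketched as a single selection function. Everything here is an assumption made for illustration: the name `next_batch_sketch`, the use of a plain set in place of the database, and the choice to pass the three commit lists in ready-made. The sketch also returns only the untracked commits of a partially processed group, which may differ from how pickman treats that case.

```python
def next_batch_sketch(mainline, sub_batches, remainder, tracked):
    """Return (phase, commits) for the next unprocessed batch.

    mainline:    commits in prev..M^1
    sub_batches: list of (sub_merge_hash, commits), oldest first
    remainder:   commits after the last sub-merge
    tracked:     set of commits already applied or pending (the 'database')
    """
    def pending(commits):
        return [c for c in commits if c not in tracked]

    todo = pending(mainline)
    if todo:
        return 'mainline', todo
    for _merge_hash, commits in sub_batches:
        todo = pending(commits)
        if todo:
            return 'sub-merge', todo    # one sub-merge per call
    todo = pending(remainder)
    if todo:
        return 'remainder', todo
    return None, []                     # mega-merge fully processed


# Simulate successive invocations, recording each batch as tracked.
tracked = set()
history = []
while True:
    phase, commits = next_batch_sketch(
        ['c1'],
        [('S1', ['x1', 'x2', 'S1']), ('S2', ['y1', 'S2'])],
        ['r1'],
        tracked)
    if phase is None:
        break
    history.append((phase, commits))
    tracked.update(commits)

for phase, commits in history:
    print(phase, commits)
```

Because the function keeps no state of its own, an interrupted run loses nothing: the next call recomputes the same answer from whatever has been recorded.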

The advance_to field in NextCommitsInfo tells the caller whether to update the source position. When processing sub-merge batches, it is None (stay put, more batches to come). When the mega-merge is fully processed, it contains the merge hash so the source advances past it.
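A caller might consume advance_to along these lines. Only the advance_to field name comes from the text above; the `NextCommitsInfoSketch` tuple, its `commits` field, the `record_batch_sketch` helper and the dict-based state are stand-ins, and the sketch assumes the final batch is the one that carries the merge hash.

```python
from collections import namedtuple

# Stand-in for NextCommitsInfo; the real tuple may have other fields.
NextCommitsInfoSketch = namedtuple('NextCommitsInfoSketch',
                                   ['commits', 'advance_to'])


def record_batch_sketch(state, info):
    """Record a batch and move the source position only when told to."""
    state['tracked'].update(info.commits)
    if info.advance_to is not None:
        state['source'] = info.advance_to   # mega-merge done: step past it
    return state


batches = [
    NextCommitsInfoSketch(['x1', 'x2', 'S1'], None),   # stay put
    NextCommitsInfoSketch(['y1', 'S2'], None),         # stay put
    NextCommitsInfoSketch(['r1'], 'M'),                # last batch: advance
]

state = {'source': 'B', 'tracked': set()}
positions = []
for info in batches:
    record_batch_sketch(state, info)
    positions.append(state['source'])

print(positions)
```

Keeping the source position fixed until the last batch means the same mega-merge is found again on every run, and the tracked commits decide which part of it is still outstanding.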

The next-merges command also benefits: it now expands mega-merges in its output, showing each sub-merge with a numbered index:

    Next merges from us/next (15 from 3 first-parent):
    abc1234abcd Merge branch 'next' (12 sub-merges):
    1. def5678defg Merge branch 'dm-next'
    2. 1112222abcd Merge branch 'video-next'
    ...
    13. 333aaaa4444 Merge branch 'minor-fixes'
    14. 444bbbb5555 Merge branch 'doc-updates'

Rewind support

When something goes wrong partway through a mega-merge, it helps to be able to step backwards. The new rewind command walks back N merges on the first-parent chain from the current source position, deletes the corresponding commits from the database, and resets the source to the earlier position.
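The rewind logic can be approximated as below. This is a sketch under several assumptions: it uses a hypothetical in-memory parent map and a set in place of the database, it treats the current source position as the first merge to rewind, and the names `plan_rewind_sketch` and `rewind_sketch` are invented. The `force` argument mirrors the dry-run default described next.

```python
def reachable(parents, tip):
    """Return the set of all commits reachable from tip (including tip)."""
    seen = set()
    stack = [tip]
    while stack:
        cur = stack.pop()
        if cur not in seen:
            seen.add(cur)
            stack.extend(parents.get(cur, []))
    return seen


def plan_rewind_sketch(parents, source, count):
    """Return (target, rewound_merges, commits_to_delete), or None."""
    merges = []
    cur = source
    while cur is not None:              # walk the first-parent chain
        pars = parents.get(cur, [])
        if len(pars) > 1:
            merges.append(cur)
        cur = pars[0] if pars else None
    if count < 1 or len(merges) <= count:
        return None                     # not enough merges to rewind
    target = merges[count]
    doomed = reachable(parents, source) - reachable(parents, target)
    return target, merges[:count], doomed


def rewind_sketch(parents, state, count, force=False):
    """Dry run by default; only change state when force is set."""
    plan = plan_rewind_sketch(parents, state['source'], count)
    if plan is not None and force:
        target, _rewound, doomed = plan
        state['tracked'] -= doomed
        state['source'] = target
    return plan


# Hypothetical mainline with three merges, first parent listed first.
GRAPH = {
    'R': [],
    'a1': ['R'], 'M1': ['R', 'a1'],
    'b1': ['M1'], 'M2': ['M1', 'b1'],
    'c1': ['M2'],
    'd1': ['M2'], 'M3': ['c1', 'd1'],
}

state = {'source': 'M3',
         'tracked': {'a1', 'M1', 'b1', 'M2', 'c1', 'd1', 'M3'}}
dry = rewind_sketch(GRAPH, state, 2)
source_after_dry_run = state['source']
rewind_sketch(GRAPH, state, 2, force=True)
print(dry[0], dry[1], len(dry[2]), state['source'])
```

Separating the plan from its execution is what makes a dry run cheap: the same plan is computed either way, and only the forced call touches the state.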

By default it performs a dry run, showing what would happen:

    $ pickman rewind us/next 2
    [dry run] Rewind 'us/next': abc123456789 -> def567890123
    Target: def5678901 Merge branch 'dm-next'
    Merges being rewound:
    abc1234abcd Merge branch 'video-next'
    bbb2345bcde Merge branch 'usb-next'
    Commits to delete from database: 47
    Use --force to execute this rewind

It also identifies any open MRs on GitLab whose cherry-pick branches correspond to commits in the rewound range, so that these can be cleaned up (normally deleted).

Summary

These changes allow pickman to handle the full range of upstream merge topologies with less manual intervention. Normal merges continue to work as before. Mega-merges are transparently decomposed into manageable batches, with progress tracked across runs and the ability to rewind when needed.